I am happy to share a couple of new and improved features and give you some guidance on how to leverage them.

TL;DR

- GraphQL subscriptions are available in a first version. They are very helpful for improving the editorial experience.

- To make translating content easier, the translation status of each content item is now available in the Squidex content view.

- As always: A few bugfixes and minor improvements.

GraphQL subscriptions

GraphQL subscriptions are the highlight of this release and are available in a first version. Even GraphQL-focused competitors do not support subscriptions yet (yes, I am talking about you, GraphCMS / Hygraph), so I am very proud to talk about this feature today. This version lays the groundwork and technical infrastructure to improve this feature over time, and I am waiting for your feedback.

With this version you can subscribe to all content changes or asset changes in a raw format, which means that you get all data at once and cannot resolve references or assets at the moment. The focus was on the technical infrastructure, which can be more complicated than you might think at first.

Technical Infrastructure

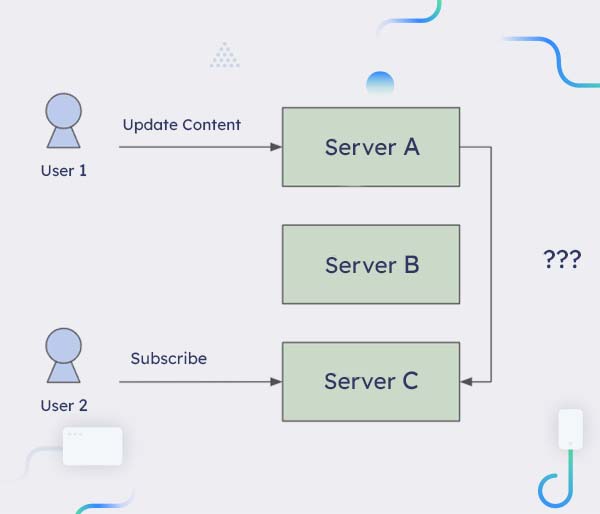

If you have a single server, subscriptions are easy to implement. Whenever somebody changes a content item, you create an event (e.g. ContentUpdated), loop over all open subscriptions and send the event to every matching one. But what happens when you have multiple servers? Then the change could happen on server A while the subscription has been created on server C, so you need a way to send the event from server A to server C.
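As a rough illustration of the single-server case, the loop over open subscriptions could look like the sketch below. The `ContentEvent` and `Subscription` shapes and the `LocalSubscriptions` class are hypothetical names chosen for this example, not the Squidex types.

```typescript
// Hypothetical shapes for illustration only; not the Squidex types.
interface ContentEvent {
  type: string; // e.g. "ContentUpdated"
  schemaName: string;
  contentId: string;
}

interface Subscription {
  id: string;
  matches(event: ContentEvent): boolean;
  send(event: ContentEvent): void;
}

class LocalSubscriptions {
  private readonly subscriptions = new Map<string, Subscription>();

  add(subscription: Subscription): void {
    this.subscriptions.set(subscription.id, subscription);
  }

  remove(id: string): void {
    this.subscriptions.delete(id);
  }

  // Loops over all open subscriptions and sends the event to the matching ones.
  // Returns the number of subscriptions that received the event.
  publish(event: ContentEvent): number {
    let delivered = 0;
    this.subscriptions.forEach(subscription => {
      if (subscription.matches(event)) {
        subscription.send(event);
        delivered++;
      }
    });
    return delivered;
  }
}
```

This only works as long as the event and the subscription live in the same process, which is exactly the limitation described above.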

problem description in graphql

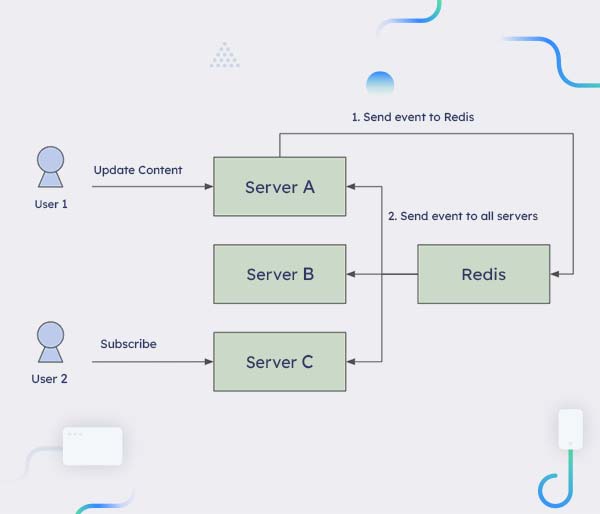

But how do you know that you have to send the event to server C and not to server B? The answer is simple: just send the event to all servers using a publish-subscribe (PubSub) implementation, for example Redis or Google PubSub. Then each server decides whether the event matches one of its subscriptions.
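A minimal sketch of this simple broadcast model follows. `BroadcastBus` is an in-memory stand-in for a real broker such as Redis, and all names and shapes are assumptions made for the example.

```typescript
// Hypothetical event shape for illustration only.
interface ContentEvent {
  type: string;
  schemaName: string;
  contentId: string;
}

// In-memory stand-in for a broker: every message reaches every server.
class BroadcastBus {
  private readonly handlers: Array<(json: string) => void> = [];
  published = 0;

  subscribe(handler: (json: string) => void): void {
    this.handlers.push(handler);
  }

  publish(json: string): void {
    this.published++;
    this.handlers.forEach(handler => handler(json));
  }
}

class BroadcastServer {
  // Filters of the subscriptions that were opened on this server.
  private readonly filters: Array<(event: ContentEvent) => boolean> = [];
  private readonly bus: BroadcastBus;
  readonly delivered: ContentEvent[] = [];

  constructor(bus: BroadcastBus) {
    this.bus = bus;
    bus.subscribe(json => this.onMessage(json));
  }

  addSubscription(filter: (event: ContentEvent) => boolean): void {
    this.filters.push(filter);
  }

  // Every event is serialized and broadcast, whether anybody is interested or not.
  raise(event: ContentEvent): void {
    this.bus.publish(JSON.stringify(event));
  }

  // Each server decides on its own whether the event matches a local subscription.
  private onMessage(json: string): void {
    const event = JSON.parse(json) as ContentEvent;
    for (const filter of this.filters) {
      if (filter(event)) {
        this.delivered.push(event);
      }
    }
  }
}
```

Note that `raise` publishes and every server deserializes each event, even when no subscription anywhere matches it.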

PubSub Model

This model can cause some issues. In Squidex, thousands of events are created per minute and only a fraction of them is expected to be needed for a GraphQL subscription. I had a look at the most popular GraphQL implementation, Apollo, but it uses a simple PubSub model as well: https://www.apollographql.com/docs/apollo-server/data/subscriptions/#the-pubsub-class. Usually events are not that big, so it can be acceptable to publish all events over PubSub. But if you have a lot of events, the PubSub system can cause significant costs, and serializing and deserializing each event into a message that can be sent over the network adds CPU overhead. So I tried to find another solution.

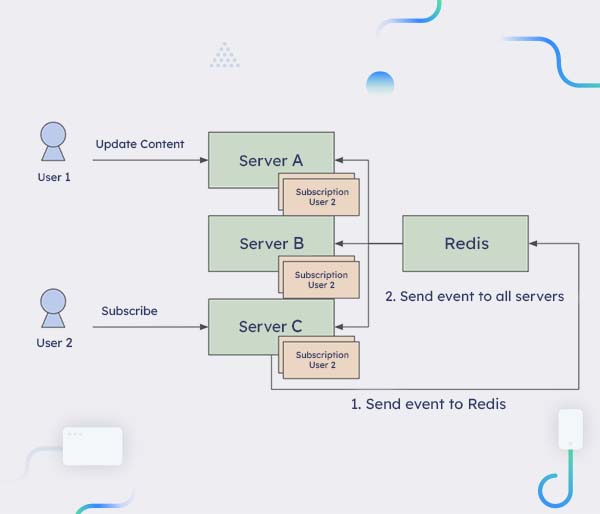

The idea is to also publish subscriptions over PubSub. When a user subscribes on server C, the subscription is published to all other servers, which means that each subscription exists on all servers. Each subscription also has a unique ID. When server A creates a new event, it uses its local copies of the subscriptions to evaluate which subscriptions match the event. If there is at least one match, the event is sent over PubSub to all servers together with the IDs of the matching subscriptions. Each server then looks up its local subscriptions with these IDs and sends the event over them to the user.

This means that the decision which subscriptions match the event is made by the server that created the event, in our case server A. If no matching subscription is currently alive, no event is sent over PubSub and these events cause no costs.
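The flow described above can be sketched as follows. This is an illustration under simplifying assumptions, not the Squidex implementation: the broker is an in-memory stand-in, a subscription filters by schema name only, and all type and class names are hypothetical.

```typescript
interface ContentEvent { type: string; schemaName: string; contentId: string; }

// Must be serializable, because it is replicated to all servers.
interface SubscriptionInfo { id: string; schemaName: string; }

type Message =
  | { kind: "subscribed"; subscription: SubscriptionInfo }
  | { kind: "event"; event: ContentEvent; subscriptionIds: string[] };

// In-memory stand-in for a broker; counts how many event messages were sent.
class InMemoryPubSub {
  private readonly handlers: Array<(json: string) => void> = [];
  publishedEvents = 0;

  subscribe(handler: (json: string) => void): void {
    this.handlers.push(handler);
  }

  publish(message: Message): void {
    if (message.kind === "event") this.publishedEvents++;
    const json = JSON.stringify(message);
    this.handlers.forEach(handler => handler(json));
  }
}

class Server {
  // Copies of the subscriptions of all servers, keyed by subscription ID.
  private readonly copies = new Map<string, SubscriptionInfo>();
  // IDs of the subscriptions whose client is connected to this server.
  private readonly localIds = new Set<string>();
  private readonly pubSub: InMemoryPubSub;
  readonly delivered: string[] = [];

  constructor(pubSub: InMemoryPubSub) {
    this.pubSub = pubSub;
    pubSub.subscribe(json => this.onMessage(JSON.parse(json) as Message));
  }

  // A client connected to this server starts a subscription.
  subscribe(subscription: SubscriptionInfo): void {
    this.localIds.add(subscription.id);
    this.pubSub.publish({ kind: "subscribed", subscription });
  }

  // An event is created on this server and matched against the local copies.
  raise(event: ContentEvent): void {
    const subscriptionIds: string[] = [];
    this.copies.forEach(copy => {
      if (copy.schemaName === event.schemaName) subscriptionIds.push(copy.id);
    });
    // Nothing is sent over PubSub when no subscription matches.
    if (subscriptionIds.length > 0) {
      this.pubSub.publish({ kind: "event", event, subscriptionIds });
    }
  }

  private onMessage(message: Message): void {
    if (message.kind === "subscribed") {
      this.copies.set(message.subscription.id, message.subscription);
      return;
    }
    // Only the server that owns a subscription forwards the event to the user.
    for (const id of message.subscriptionIds) {
      if (this.localIds.has(id)) this.delivered.push(id + ":" + message.event.contentId);
    }
  }
}
```

The trade-off in this sketch is that subscriptions have to be expressed as data rather than as arbitrary callbacks, because every server needs to be able to evaluate them.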

Publishing Subscriptions

There are a few technical challenges with this model. For example, it is difficult to remove subscriptions: when server C crashes or is updated to a new version, the other servers might think that their copies of the subscriptions coming from server C are still valid. Therefore each server sends a message with a timestamp and the IDs of all its local subscriptions over PubSub every 10 seconds.

The other servers update all their matching copies with the received timestamp. If a copy has not been updated for some time, the subscription is considered dead and removed.
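The bookkeeping on the receiving side could be sketched like this. The class and method names are hypothetical, and the timeout is a parameter because the text above only states the 10-second heartbeat interval, not how long a copy may stay silent before it is removed.

```typescript
// Tracks the copies of remote subscriptions and when each one was last confirmed.
class SubscriptionCopies {
  private readonly lastSeen = new Map<string, number>();
  private readonly timeoutMs: number;

  constructor(timeoutMs: number) {
    this.timeoutMs = timeoutMs;
  }

  // A new subscription was announced by another server.
  add(id: string, timestamp: number): void {
    this.lastSeen.set(id, timestamp);
  }

  has(id: string): boolean {
    return this.lastSeen.has(id);
  }

  // A heartbeat arrived: refresh the timestamp of all matching copies.
  onHeartbeat(subscriptionIds: string[], timestamp: number): void {
    for (const id of subscriptionIds) {
      if (this.lastSeen.has(id)) {
        this.lastSeen.set(id, timestamp);
      }
    }
  }

  // Removes all copies that have not been refreshed within the timeout
  // and returns their IDs.
  removeDead(now: number): string[] {
    const dead: string[] = [];
    this.lastSeen.forEach((seen, id) => {
      if (now - seen > this.timeoutMs) dead.push(id);
    });
    dead.forEach(id => this.lastSeen.delete(id));
    return dead;
  }
}
```

With a heartbeat every 10 seconds, a timeout of a few heartbeat intervals (for example 30 seconds, an assumed value) would tolerate a delayed or lost message before a subscription is dropped.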

Use Cases

There is a wide range of use cases, but the most important one for me is improving the editorial experience for content authors. You can subscribe to content updates in your frontend and automatically reload the page or a component when an update is received.

The hotels sample in React has been updated to support this use case. In this sample the graphql-ws package has been used: https://github.com/enisdenjo/graphql-ws.

The following snippet shows a very simple approach. We just listen to all content changes and reload the current page whenever there is a change. Of course this is not the best implementation. It would make more sense to only update the components that render at least one content item whose ID matches the ID of the event just received. The hotels example provides a better solution for this problem.
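A minimal sketch of the simple approach, written against a small interface that mirrors the `subscribe(payload, sink)` method of the graphql-ws client so that the example has no dependencies. The subscription field `contentChanges` and the selected fields are assumptions; check the GraphQL schema of your app for the exact names.

```typescript
// Minimal subset of the graphql-ws client, declared here to keep the sketch self-contained.
interface SubscriptionClient {
  subscribe(
    payload: { query: string },
    sink: {
      next(value: unknown): void;
      error(error: unknown): void;
      complete(): void;
    }
  ): () => void;
}

// Assumed subscription field and selection; verify against your app's schema.
const CONTENT_CHANGES = `
  subscription {
    contentChanges {
      id
      schemaName
    }
  }`;

// Calls `reload` on every content change and returns a function that stops listening.
function reloadOnContentChange(client: SubscriptionClient, reload: () => void): () => void {
  return client.subscribe(
    { query: CONTENT_CHANGES },
    {
      next: () => reload(),
      error: error => console.error("Subscription failed", error),
      complete: () => {},
    }
  );
}
```

In a browser, the client would typically come from `createClient({ url })` in graphql-ws, with the WebSocket URL of your app's GraphQL endpoint, and `reload` could be `() => window.location.reload()`.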

Translation Status

When you have a lot of languages, usually not every content item has been translated to all languages. It is just a matter of time and priority, and very often the goal is to improve this over time. So far, however, it was not easy to find the content items that need to be improved.

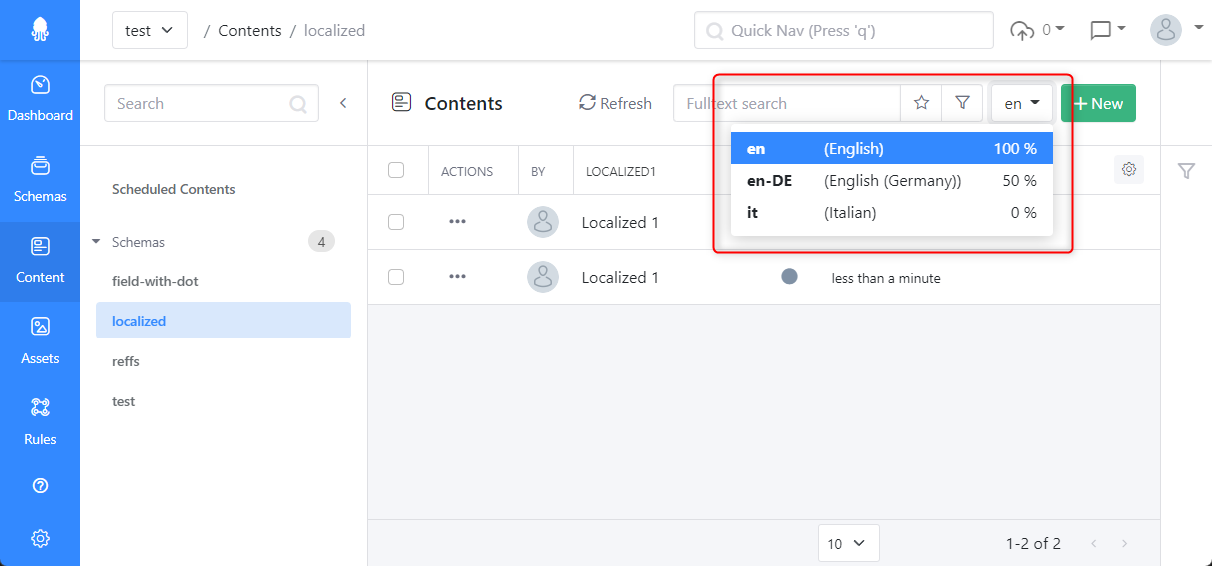

Therefore Squidex now shows the average translation status in the content list and the status of a single item in the content editor itself:

Translation Status

For each language we show the status in percent. 100% means that every field has been translated and 0% means that no field has been translated so far.
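To make the percentage concrete, here is one way such a status could be computed. This is an illustration, not the Squidex calculation: it assumes content data shaped as field name, then language code, then value, counts a field as translated when it has a non-empty value for the language, and rounds to whole percent.

```typescript
// Assumed data shape: field name -> language code -> value.
type ContentData = Record<string, Record<string, unknown>>;

// Returns the share of translated fields per language in percent (0 to 100).
function translationStatus(
  data: ContentData,
  languages: string[],
  localizedFields: string[]
): Record<string, number> {
  const result: Record<string, number> = {};

  for (const language of languages) {
    let translated = 0;

    for (const field of localizedFields) {
      const value = data[field]?.[language];
      // Assumption: missing, null and empty-string values count as not translated.
      if (value !== undefined && value !== null && value !== "") {
        translated++;
      }
    }

    // Without localized fields there is nothing left to translate.
    result[language] =
      localizedFields.length === 0
        ? 100
        : Math.round((100 * translated) / localizedFields.length);
  }

  return result;
}
```

For an item with the fields `title` and `description` where only `title` has a German value, this function yields 50 for `de`.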

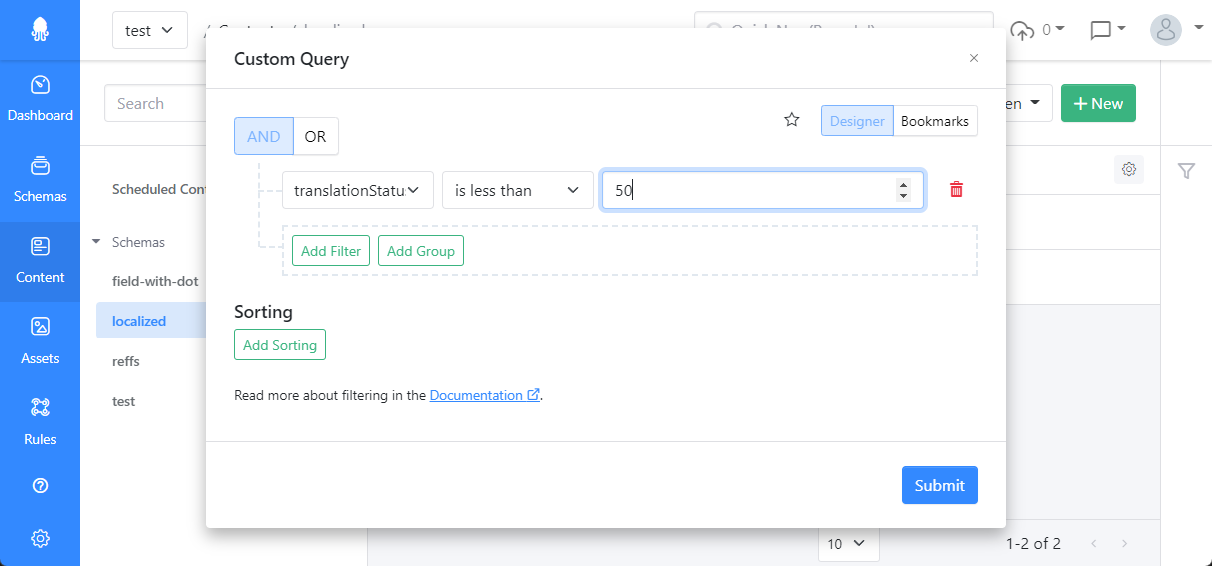

You can also use the filter dialog to get content items with a low translation status:

Filter for Translation Status

This should make it much easier to work with localized content in Squidex.

Outlook

In the next weeks the focus will be on bugfixes and minor improvements. There are no concrete plans for bigger features yet, but you can check out the Trello board: https://trello.com/b/KakM4F3S/squidex-roadmap

If you want to discuss your requirements you can also schedule a call: https://calendly.com/squidex-meeting/30min?month=2022-09.